AI Governance Software

Riskonnect’s AI Governance software helps you apply a structured methodology to demonstrate control of AI use – without limiting innovation.

Use AI with confidence. Minimize risk by maintaining proactive, real-time oversight as AI scales across the organization.

Prove AI compliance – and avoid penalties. Automate governance processes, maintain audit-ready records, and align with evolving regulations.

Ensure AI integrity and accountability. Centralize AI risk detection so teams across the organization – legal, procurement, security, and others – can quickly determine whether AI supports or threatens business goals.


Product Highlights

Confidently Comply with AI Regulations

Worried about incurring penalties for accidentally violating evolving AI regulations? Riskonnect’s AI Governance software comes preloaded with frameworks and regulatory standards to help you stay ahead of compliance requirements, so you can focus on innovation — not red tape.

- Leverage built-in regulations and frameworks like the EU AI Act, GDPR, ISO 42001, and NIST AI RMF.

- Run automated audits to ensure transparency and accountability across AI systems.

- Tailor governance policies to align with industry-specific risks and organizational needs.

Proactively Monitor

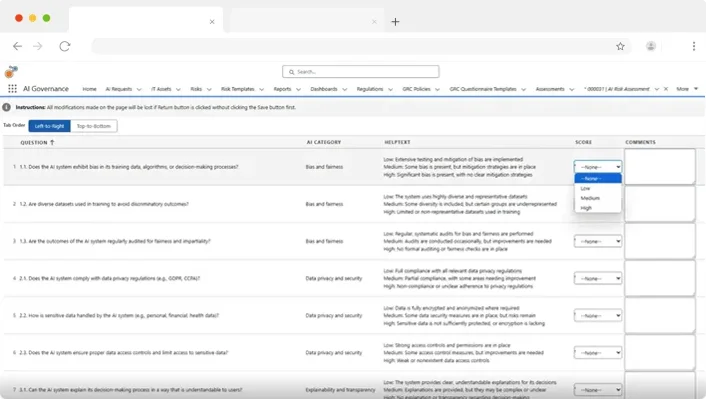

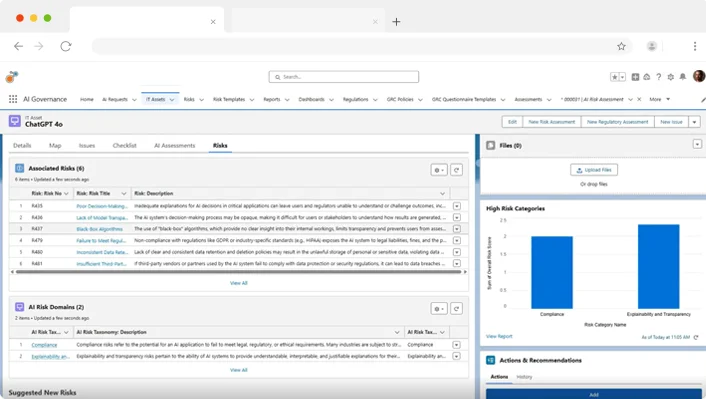

AI Risk and Ethics

Can you identify bias in AI output before it causes harm? Riskonnect’s AI Governance software continuously monitors your AI systems for ethical integrity, compliance, and assurance, giving you the insight to act before risks escalate into problems.

- Conduct control testing to detect bias, model drift, and anomalies early.

- Trigger automated alerts when deviation or compliance risks arise.

- Score AI risk and build mitigation plans to ensure reliable governance outcomes.
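To make the drift-detection and alerting bullets above concrete, here is a minimal, illustrative sketch of one common way to flag model drift: the population stability index (PSI) between a baseline sample of model scores and a recent one. It is not Riskonnect's implementation or API. The function names, the ten-bin layout, and the 0.2 alert threshold (a widely used rule of thumb) are all assumptions chosen for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between two samples, using equal-width bins over the baseline's range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def bin_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
            counts[idx] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    expected = bin_shares(baseline)
    actual = bin_shares(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_alert(
    baseline: Sequence[float],
    current: Sequence[float],
    threshold: float = 0.2,  # assumed rule-of-thumb cutoff, not a product setting
) -> dict:
    """Return the PSI and whether it exceeds the alert threshold."""
    psi = population_stability_index(baseline, current)
    return {"psi": psi, "alert": psi > threshold}
```

In this sketch, a call such as `drift_alert(last_quarter_scores, this_week_scores)` would return a PSI value and an `alert` flag that a governance workflow could route to the model's owner. A real deployment would tune the binning and threshold per model.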

Scale AI Across the Enterprise without Fear

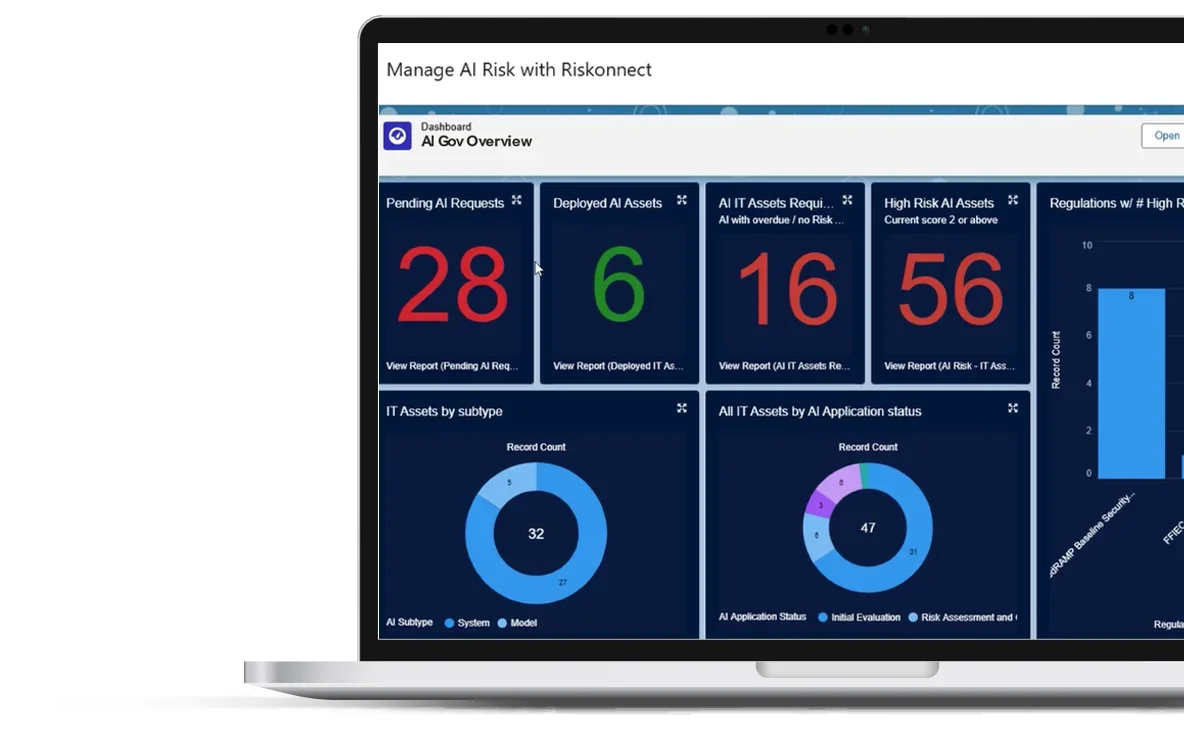

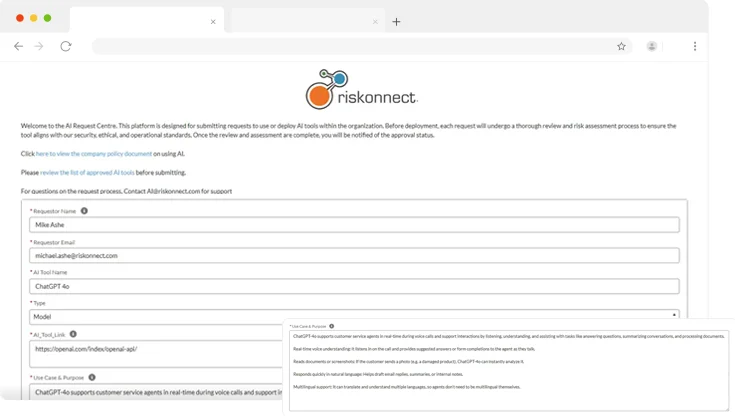

Are you equipped to effectively govern AI as usage scales? Riskonnect’s AI Governance software provides the structure and visibility to allow safe experimentation and adoption across departments and geographies — all from a single platform.

- Manage the full AI model lifecycle with built-in version control.

- Access real-time dashboards for a clear, enterprise-wide view of AI governance.

- Integrate seamlessly with enterprise risk management systems to unify oversight.
